There is no Objective Probability of Fine Tuning

And how this blunts Fine-Tuning Arguments

Some proponents of Fine-tuning arguments present them in a strong Bayesian form. The idea behind the inference schema is that, for an idealised rational agent (something we should all strive to be), the conditional probability of Theism given Fine-tuning is high enough to warrant full-blown, rationally justified belief in Theism.

Fine-tuning of the universe for life, in the sense proponents of the argument for Theism use it, means something like this: of all the ways the universe could have been (i.e. if various fundamental parameters that feature in models of physics were different from what they are), very few are life-permitting. The striking fact that our universe is life-permitting stands in need of an explanation. It is then argued that the conditional probability of Fine-tuning given Theism (i.e. God existing) is quite high, because God is an explanatory entity with both the power (by virtue of omnipotence) and the rational capacities and desires to direct his efforts towards creating life like us. Under naturalism (or various atheisms), by contrast, there is no explanatory entity that raises our expectation of a finely tuned universe, so the likelihood is very low.

Strong Bayesian formulations of the argument take these considerations as premises and then advise us on how we should update using Bayes’s formula:

$$
P(A \mid B) = \frac{P(B \mid A)\, P(A)}{P(B)}
$$

In this case ‘A’ would be our relevant hypothesis, and ‘B’ our explanandum (Fine-tuning). If we perform the relevant updates, then given how much higher P(Fine-tuning | Theism) is than P(Fine-tuning | Naturalism (or atheism)), we are left with a posterior probability of Theism which is very high, warranting belief. Or so it is claimed.

You will notice that for all the talk of Bayesianism here, we haven’t actually used any numbers. This is a problem endemic to philosophy, where the rigour and authority of fields like physics or mathematics are frequently claimed by donning the garb of equations, whilst the work that the formalisms are supposed to do is gestured at and hand-waved away.

There are several interpretations of probability, and which one you buy into will inform the scope and limits of this argument. I shall not talk about subjective interpretations here, because on subjective accounts an agent’s credences can take on any coherent values, so there is no guarantee that they will match the values the argument needs to establish its conclusion. If they differ, then on a subjective account that’s the end of the story, though I can unpack that, and the strategies FTA proponents use to try to get around it, in another post. Here, I shall focus on Objective accounts of probability, because when these accounts are appealed to, the probabilities given in the argument are supposed to be normatively binding on the recipient of the argument.

There are several probabilities used in the argument. The one I shall focus on is the marginal probability of Fine-tuning, P(FT). I happen to believe there are problems for the other required probabilities too, but here I focus on this one.
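To make the target explicit, here is Bayes’s formula with the general hypothesis and evidence replaced by Theism and Fine-tuning. The abbreviation T for Theism is introduced here purely for illustration; FT is as above.

$$
P(T \mid \mathrm{FT}) = \frac{P(\mathrm{FT} \mid T)\, P(T)}{P(\mathrm{FT})}
$$

The marginal P(FT) sits in the denominator, so the posterior on the left is only defined if P(FT) is itself a well-defined, non-zero number.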

FTA proponents give different lists of the parameters that are tweaked in FTAs. The number of parameters is irrelevant to the point I intend to make, so let us first consider a single parameter which varies. I’m not certain whether we would include negative values for parameters, as I am not a physicist, so I shall restrict myself to non-negative values. So, for a given parameter, we are considering a range of possible values with no upper bound.

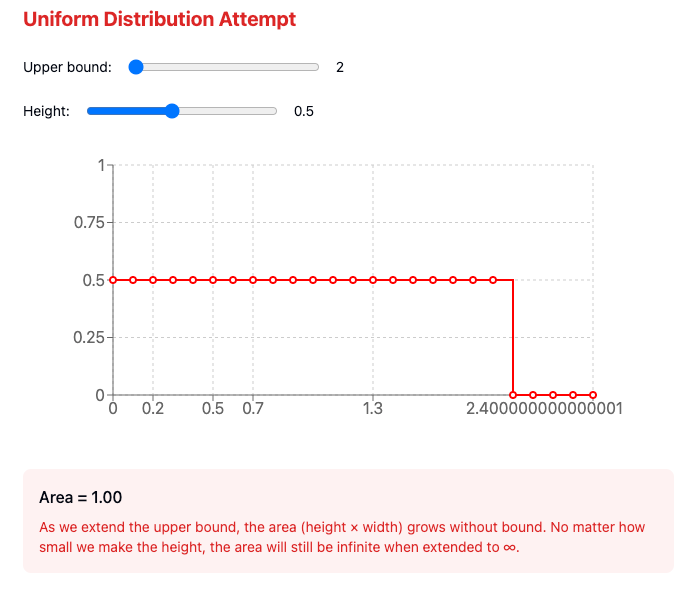

Given that “fine-tuning” is not a well-defined process, there isn’t a good reason to pick any particular probability density function (PDF) to distribute over our parameter. FTA proponents usually apply something like the principle of indifference and argue that we ought to apply a uniform distribution over the variations of the parameter. The problem here is that, by the axioms of probability theory, total probability cannot exceed 1, which means the area under the curve of a valid PDF must equal exactly 1.

The problem for Fine-tuning is that with a uniform distribution, when our parameter is varied over an interval [0, +infinity), regardless of how low we set the probability density at each point along the x-axis, we end up with an area of some positive constant × infinity, which comes out as infinite (very much not 1).
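A minimal numerical sketch of this point, assuming we approximate [0, +infinity) by ever larger finite ranges [0, upper]. The function names, the window [1, 2] and the particular bounds are hypothetical choices for illustration, not values taken from any fine-tuning argument. It shows two things: any fixed positive density height eventually gives an area above 1, and if we instead keep renormalising the uniform density as the range grows, the probability of any fixed window shrinks towards 0.

```python
import math


def uniform_density(upper: float) -> float:
    """Height of the uniform density on [0, upper], chosen so the area is 1."""
    if not (upper > 0 and math.isfinite(upper)):
        # No constant height gives area 1 over an unbounded range.
        raise ValueError("a uniform density needs a finite, positive upper bound")
    return 1.0 / upper


def window_probability(low: float, high: float, upper: float) -> float:
    """Probability that a uniform draw from [0, upper] lands in [low, high]."""
    overlap = max(0.0, min(high, upper) - max(low, 0.0))
    return overlap * uniform_density(upper)


def area_under_constant(height: float, upper: float) -> float:
    """Area under a constant density of the given height over [0, upper]."""
    return height * upper


if __name__ == "__main__":
    tiny_height = 1e-12  # hypothetical "very low" density value
    for upper in (1e1, 1e3, 1e6, 1e9, 1e15):
        print(
            f"upper={upper:.0e}  "
            f"P(window [1, 2])={window_probability(1.0, 2.0, upper):.3e}  "
            f"area at fixed height={area_under_constant(tiny_height, upper):.3e}"
        )
```

Neither route yields a usable uniform distribution in the limit: the fixed-height area blows past 1, and the renormalised window probability collapses to 0.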

This argument is made clearly in a 2001 paper by the McGrews and Eric Vestrup [1], where, in an historic first, I find myself agreeing with them. The key points in their paper are:

- To calculate probabilities over infinite ranges, we need a "normalizable" probability distribution (one that integrates to 1).
- In the fine-tuning argument, some try to say "all values are equally likely" (a uniform distribution).
- But as we just saw, you can't have a uniform distribution over [0,∞) because it won't normalize (integrate to 1).
- Therefore, you can't calculate meaningful probabilities under the assumption that "all values are equally likely".

If this is correct, then we cannot meaningfully provide a value for the marginal probability of Fine-tuning and hence we cannot conclude anything with respect to “updating” on Fine-tuning evidence.

A similar point is made in a really good documentary on Fine-tuning that I recommend to anyone interested [2].

At 10:32 Graham Priest says (with my glosses in square brackets):

If you assign them [your infinite parameter variations] equal probability, if you give them any finite probability, they’ve got to add up to one. [But] if you take an infinite number of finite quantities they add up to something that’s infinite.

If you want to normalise and make sure you get a value that’s between 0 and 1, you’ve got to say that each thing has zero probability. But then you hit the problem of dividing by zero [in the denominator of Bayes’s theorem, as 0 × infinity is 0]. But on the other hand if you give them a probability of something that’s greater than zero, then they add up to infinity. So either it’s zero, or it’s infinite. And neither of those is going to work.
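The dilemma Priest describes can be written out for the simplest case. As a sketch, assume a countably infinite set of outcomes, each assigned the same probability p. The index i and the symbol p are notation introduced here for illustration, not Priest's.

$$
\sum_{i=1}^{\infty} p \;=\; \lim_{n \to \infty} n\,p \;=\;
\begin{cases}
0, & p = 0 \\
\infty, & p > 0
\end{cases}
$$

No choice of p makes the total equal 1, which is what a probability distribution requires.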

There are further debates around Fine-tuning arguments, concerning objective vs subjective interpretations of probability and other forms of inference with which one can argue for Fine-tuning. However, short of providing some principled distribution/measure whose area integrates to a finite number that can be normalised to 1, the strong Bayesian formulation of this argument is dead in the water.

I shall discuss some of these approaches, and the many interesting tangents in epistemology and probability theory that come up in these discussions, in future posts. If you enjoy this content, please subscribe to my Substack, like, comment on and share the article, and consider becoming a donor.

[1] Timothy McGrew, Lydia McGrew, Eric Vestrup, Probabilities and the Fine‐Tuning Argument: a Sceptical View, Mind, Volume 110, Issue 440, October 2001, Pages 1027–1038, https://doi.org/10.1093/mind/110.440.1027

[2] SkyDive Phil, November 2022, Physicists and Philosophers debunk The Fine tuning Argument, YouTube